Last fall, I ate my own cooking and put my money where my mouth is. After my postdoctoral fellowship at Georgia Tech, I transitioned to a research affiliate role at the Institute and decided not to take a new job.

About a year ago, I stumbled on a LinkedIn post that opened with a pertinent question and then briefly described the obvious incursion of AI tools into knowledge work. The fellow wrote, “What’s left in research when so many things can be done by AI? 🤔 …”

Some people who might have found that take a bit hyperbolic at the time might not anymore, particularly if they have tried tools like CodeScientist, Autodiscovery, and Kosmos, to mention just a few.

My response was as follows:

"A researcher could, and probably should, deliberately increase their breadth (and depth) of knowledge/competency. Many aspects of research will be aggressively automated over the next few years (This is true for all knowledge work) So, insights (reads: your knowledge bank) would be ultimately highly priced in a world where some research can be done quasi-on-demand. And the 'insights' are going to scale to the extent to which one leverages AI; you probably don't want to be the third servant, in the Parable of Talents who buries the one talent received into the ground, and ends up with nothing. And this parable maps to what we will be seeing going forward. 5 to 10, 2 to 4, and so on. The talent is your knowledge of what you know is possible. The (research) questions you ask. It's probably the best time to be a generalist."

Today, my response could really use some workshopping, but that’s not the reason for this essay. The only thing I would add is that, as a hypothesis, we are approaching even more pronounced barbell territory: hyperspecialist or (hyper)generalist. A plausible scenario is that everything in the middle will be gobbled up by AI. We can argue about timeframes, but again, that isn’t the purpose of this essay. This essay is, in part, an attempt to show what acting on that hypothesis looks like in practice.

After my fellowship, I passed on a couple of opportunities that would have been great in the pre-ChatGPT era (we really need a name that rolls off the tongue for this). But as I was saying, history is no longer “settled”; it’s moving fast — too fast in some domains. The future is profoundly uncertain, and precisely because of this, actions matter more than usual.

And it was principally the barbell and the “moving history” arguments that propelled me in this direction, in spite of the fact that the move ain’t cheap, to say the least.

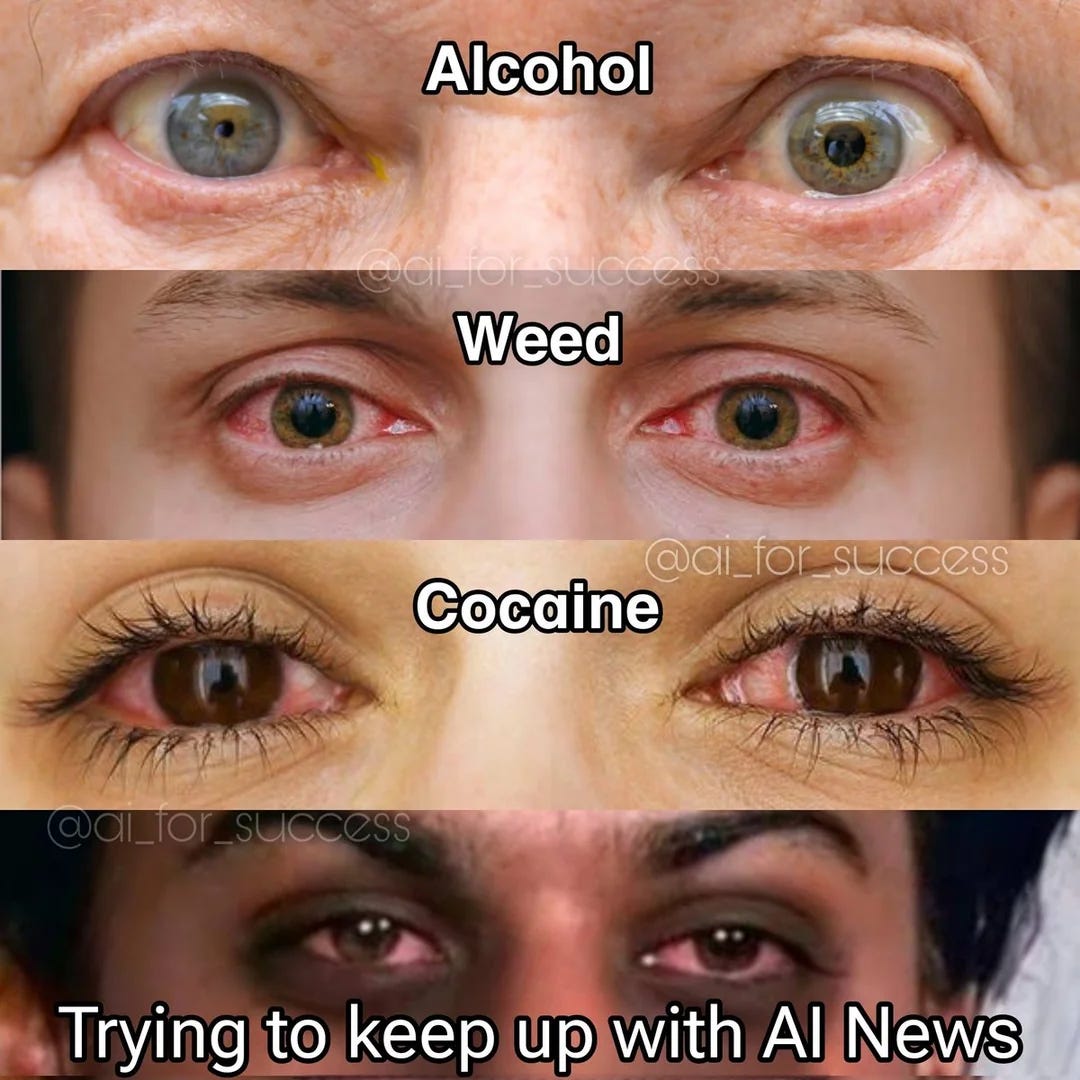

And now, with the privilege of hindsight, I realize I actually could have used some rest. The path through graduate school and a postdoc is fiercely intellectually stimulating, but undeniably stressful. Add the years spent navigating the U.S. immigration system as an international student, alongside the recent relentless pace of tracking the AI disruption like a hawk, and stepping back makes some sense.

I have had a fairly eclectic academic journey so far; perhaps this exposes my generalist bias. I hold a PhD in Biochemistry and Molecular Biology. I did some work in yeast genomics and studied Caenorhabditis elegans early in grad school. As a senior graduate student and postdoc, I published several works on applied AI/ML in biomedicine.

One of the main ‘skill issues’ I’ve had over the past couple of years (and for the engineers reading, that pun is fully intended) arose simply by virtue of being in a research environment. It was the ability to take an idea and turn it into production-level software with all the bells and whistles. I could do bits and pieces, but not the full gamut.

To solve this, the core challenge of my break became building intelligent systems end-to-end: models, agents, backend infrastructure, and user experience. What people now call full-stack AI/agent engineering. It felt like a reasonable way to expand my wingspan. I was already adjacent to the field through a number of side projects, so this seemed like a natural escalation. It was a sufficiently hard problem, and increasingly, an important one, since the next iteration of knowledge work is clearly automation (for better or for worse). And how long the skillset itself will be “useful” simply depends on which Substack newsletter you read, or which AI lab CEO you listen to.

However, as I hinted earlier, my break soon turned into a much-needed respite. I worked less — by virtue of being more focused (and leveraging AI) — read more books, played video games, and exercised much more.

I’ll give an example. All my life, I had wrongly assumed I was not a good long-distance runner, despite being, at least by American standards, very active. These days, I run 10 kilometers most days of the week. (It’s crazy how we tell ourselves we are not good at things we simply haven’t tried enough.) Along the way, I built a holistic, bespoke personal operating system from the ground up, one that incorporates work, learning, exercise, rest, and more.

One of the main projects I am working on, now close to an alpha launch, is an experiment to test a hypothesis on what knowledge navigation, consumption, and collaboration might look like in a world where research is commodified.

Over the next weeks and months, I will share more artifacts (essays, apps, videos) publicly, and I would be glad to hear your feedback. I will share my “in-the-trenches” micro-updates mostly on Substack Notes, and I will also collect them here. General essays will be on Episteme Engine, while technical AI and engineering deep dives will be on my AI blog — The Epsilon. Video updates will be shared here as well.

Calling this a career break is something of a misnomer; it is more like building through an AI transition (admittedly, that doesn’t roll off the tongue either). The work didn’t stop; it changed shape. Whether this turns out to have been the right call is still an open question. But even if the barbell hypothesis is wrong (which I strongly doubt), a wider wingspan is not the worst thing to have built.

P.S.

If you are an experienced engineer, especially in the United States, looking for a new AI adventure around (scientific) research, biomedicine, or science education, feel free to reach out to discuss the possibility of teaming up. I have far too many projects and feature ideas, and too little time.

I am considering publishing a full-length memoir essay at some point in the future, structured as a sequel to “Plans Are Overrated”. Express your interest here.